Computer architecture refers to the design and organization of a computer system: its instruction set, its hardware components, and the way those components communicate. It plays a central role in determining the performance and efficiency of a computer. In this essay, we will explore some common questions and answers related to computer architecture.

- What is the difference between a von Neumann and a Harvard architecture?

The von Neumann architecture, also known as the von Neumann model, is a design for a stored-program computer. It was described by John von Neumann in the 1940s and is characterized by a central processing unit (CPU) that accesses both instructions and data in the same memory, over a shared bus. Because instruction fetches and data accesses compete for that single path, they cannot happen at the same time, a limitation often called the von Neumann bottleneck.

The Harvard architecture, on the other hand, keeps instructions and data in separate memories, each connected to the CPU by its own bus. This allows the CPU to fetch an instruction and read or write data at the same time, which can improve performance.
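To make the difference concrete, here is a minimal sketch that counts bus cycles under a deliberately simplified model. It assumes every memory access occupies its bus for exactly one cycle and that a Harvard machine fully overlaps an instruction fetch with a data access; the function name `bus_cycles` and the sample program are hypothetical, chosen only for illustration.

```python
def bus_cycles(instructions, separate_buses):
    """Count bus cycles for a list of instructions.

    Each entry is a bool: True if the instruction also reads or writes data.
    Toy model (an assumption): every memory access takes one bus cycle.
    """
    cycles = 0
    for touches_data in instructions:
        if separate_buses:
            # Harvard: the fetch and the data access use different buses
            # and are assumed to overlap completely.
            cycles += 1
        else:
            # von Neumann: the fetch and the data access share one bus,
            # so they happen one after the other.
            cycles += 2 if touches_data else 1
    return cycles


# Hypothetical program: three of the five instructions access data.
program = [True, False, True, True, False]
von_neumann = bus_cycles(program, separate_buses=False)
harvard = bus_cycles(program, separate_buses=True)
print(von_neumann, harvard)  # 8 5
```

Real processors are more nuanced than this model suggests; the sketch only shows why a second bus removes the contention between fetches and data accesses.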

- What are RISC and CISC architectures?

RISC (Reduced Instruction Set Computer) is a design approach that uses a small set of simple, regular instructions, with memory typically accessed only through explicit load and store instructions. The goal is to simplify the decoding hardware and reduce the instruction cycle time. As a result, RISC processors can often be made simpler and more power-efficient than their CISC (Complex Instruction Set Computer) counterparts, although actual performance depends on the implementation and not on the instruction set alone.

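CISC processors, on the other hand, use a larger and more complex set of instructions, in which a single instruction may perform several low-level operations, such as loading operands from memory, computing a result, and storing it back. This flexibility can make programs more compact, but it comes at a cost: the instructions are harder to decode, often take several clock cycles, and require more transistors. Many modern CISC processors, such as x86 designs, narrow the gap by translating complex instructions into simpler internal micro-operations.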
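The contrast can be illustrated with a toy interpreter that computes `c = a + b` in two ways: once with register-only arithmetic plus explicit loads and stores (RISC style), and once with a single memory-to-memory instruction (CISC style). The opcodes `LOAD`, `STORE`, `ADD`, and `ADDM` are hypothetical and do not correspond to any real instruction set; this is a sketch, not a model of actual hardware.

```python
def run(program, memory):
    """Execute a toy instruction list and return the instruction count."""
    regs = {}
    for op, *args in program:
        if op == "LOAD":      # LOAD reg, addr: memory -> register
            regs[args[0]] = memory[args[1]]
        elif op == "STORE":   # STORE reg, addr: register -> memory
            memory[args[1]] = regs[args[0]]
        elif op == "ADD":     # ADD dst, src1, src2: registers only
            regs[args[0]] = regs[args[1]] + regs[args[2]]
        elif op == "ADDM":    # ADDM dst, src1, src2: memory to memory
            memory[args[0]] = memory[args[1]] + memory[args[2]]
        else:
            raise ValueError(f"unknown opcode: {op}")
    return len(program)


# RISC style: four simple instructions.
risc_program = [
    ("LOAD", "r1", "a"),
    ("LOAD", "r2", "b"),
    ("ADD", "r3", "r1", "r2"),
    ("STORE", "r3", "c"),
]
# CISC style: one complex instruction doing the same work.
cisc_program = [("ADDM", "c", "a", "b")]

risc_mem = {"a": 2, "b": 3, "c": 0}
cisc_mem = {"a": 2, "b": 3, "c": 0}
risc_count = run(risc_program, risc_mem)
cisc_count = run(cisc_program, cisc_mem)
print(risc_count, risc_mem["c"])  # 4 5
print(cisc_count, cisc_mem["c"])  # 1 5
```

Both programs leave the same result in memory. The instruction count differs, but the count says nothing about how many clock cycles each instruction takes, which is where the trade-off lies.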

- What is the purpose of cache memory?

Cache memory is a type of high-speed memory that is used to store frequently accessed data and instructions. It is located between the CPU and main memory and is designed to improve the performance of a computer by reducing the number of accesses to main memory.

When the CPU needs to access data or instructions, it first checks the cache memory to see if the data is already stored there. If it is, the CPU can access the data directly from the cache, which is much faster than accessing main memory. If the data is not in the cache, it is fetched from main memory and stored in the cache for future access.
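The lookup described above can be sketched as a small simulation. A successful lookup is usually called a hit and an unsuccessful one a miss. The sketch assumes a direct-mapped cache, in which each address maps to exactly one cache line (`address % num_lines`); that placement policy, the class name `DirectMappedCache`, and the sample addresses are illustrative assumptions, and real caches also work on multi-byte blocks instead of single words.

```python
class DirectMappedCache:
    """Toy direct-mapped cache in front of a list acting as main memory."""

    def __init__(self, num_lines, memory):
        self.num_lines = num_lines
        self.memory = memory
        self.lines = {}   # line index -> (address, value)
        self.hits = 0
        self.misses = 0

    def read(self, address):
        index = address % self.num_lines
        entry = self.lines.get(index)
        if entry is not None and entry[0] == address:
            # Hit: the data is already in the cache.
            self.hits += 1
            return entry[1]
        # Miss: fetch from main memory and keep a copy for future accesses,
        # replacing whatever was stored in this line before.
        self.misses += 1
        value = self.memory[address]
        self.lines[index] = (address, value)
        return value


memory = list(range(100, 116))  # 16 words of "main memory"
cache = DirectMappedCache(num_lines=4, memory=memory)
values = [cache.read(a) for a in [0, 1, 0, 1, 4, 0]]
print(cache.hits, cache.misses)  # 2 4
```

The repeated reads of addresses 0 and 1 hit. Address 4 maps to the same line as address 0 and evicts it, so the final read of address 0 misses again.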

Cache memory is an important part of computer architecture because main memory is much slower than the CPU. Without a cache, the CPU would spend much of its time waiting for data and instructions to arrive.

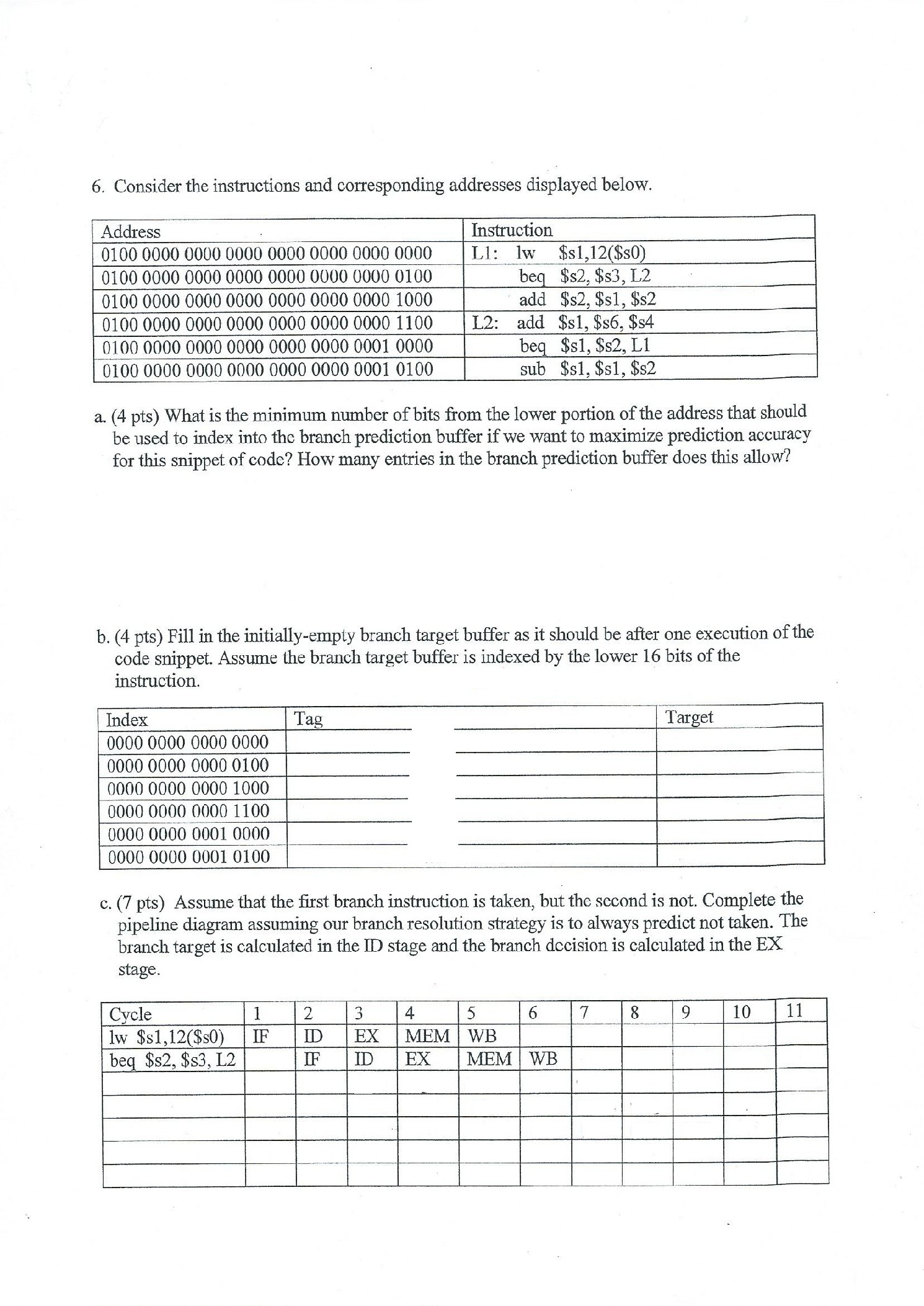

- What is pipelining and how does it work?

Pipelining is a technique used in computer architecture to improve the performance of a processor by allowing multiple instructions to be in progress at the same time. It works by dividing the execution of each instruction into a series of stages, each of which performs a specific task and is handled by a dedicated part of the CPU.

For example, a five-stage pipeline might have stages for instruction fetch, instruction decode, execute, memory access, and writeback. As each stage completes its task, it passes the result to the next stage and starts work on the following instruction, so several instructions are in flight at once, each in a different stage.
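The overlap is easier to see in a schedule. The following sketch builds, for an ideal five-stage pipeline with no stalls, a table of which instruction occupies which stage in each clock cycle. The stage abbreviations (`IF`, `ID`, `EX`, `MEM`, `WB`) and the function name `schedule` are assumptions made for illustration.

```python
STAGES = ["IF", "ID", "EX", "MEM", "WB"]  # fetch, decode, execute, memory, writeback


def schedule(num_instructions):
    """Return {cycle: {stage: instruction_index}} for an ideal pipeline."""
    table = {}
    for instr in range(num_instructions):
        for offset, stage in enumerate(STAGES):
            # Instruction i (0-based) enters IF in cycle i + 1 and then
            # advances one stage per cycle.
            cycle = instr + offset + 1
            table.setdefault(cycle, {})[stage] = instr
    return table


table = schedule(3)
for cycle in sorted(table):
    print(cycle, table[cycle])
```

In cycle 3, for instance, instruction 0 is in `EX`, instruction 1 is in `ID`, and instruction 2 is in `IF`, so three instructions are being worked on at once.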

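Pipelining can significantly improve the performance of a processor by increasing its throughput: ideally, one instruction completes every clock cycle, even though each individual instruction still takes several cycles from start to finish. However, it also introduces hazards, such as an instruction needing a result that an earlier instruction has not yet produced, or a branch changing which instruction should come next. These hazards can stall the pipeline and must be carefully managed to ensure good performance.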
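A rough cycle count shows both the gain and the cost. The sketch below assumes every stage takes one clock cycle, so an unpipelined processor with `k` stages needs `n * k` cycles for `n` instructions, while an ideal pipeline needs `k + (n - 1)` cycles: `k` to fill the pipeline, then one per remaining instruction. The `stall_cycles` parameter is a hypothetical stand-in for all overhead lumped together.

```python
def unpipelined_cycles(n, k):
    """Each of n instructions passes through all k stages before the next starts."""
    return n * k


def pipelined_cycles(n, k, stall_cycles=0):
    """k cycles to fill the pipeline, one more per remaining instruction, plus stalls."""
    return k + (n - 1) + stall_cycles


n, k = 100, 5
ideal = unpipelined_cycles(n, k) / pipelined_cycles(n, k)
with_stalls = unpipelined_cycles(n, k) / pipelined_cycles(n, k, stall_cycles=20)
print(round(ideal, 2))        # 4.81
print(round(with_stalls, 2))  # 4.03
```

Under these assumptions the speedup approaches the number of stages as `n` grows, and every stall cycle pulls it back down.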

- What is multi